University chatbot that answers prospective students, enrolled students, and faculty around the clock

Asyntai deploys a university chatbot across admissions sites, student portals, library pages, and IT helpdesks — fluent in 36 languages, trained on your catalog, handbook, and policy PDFs, never asleep when an applicant in Singapore is finishing a Common App essay at 3 a.m.

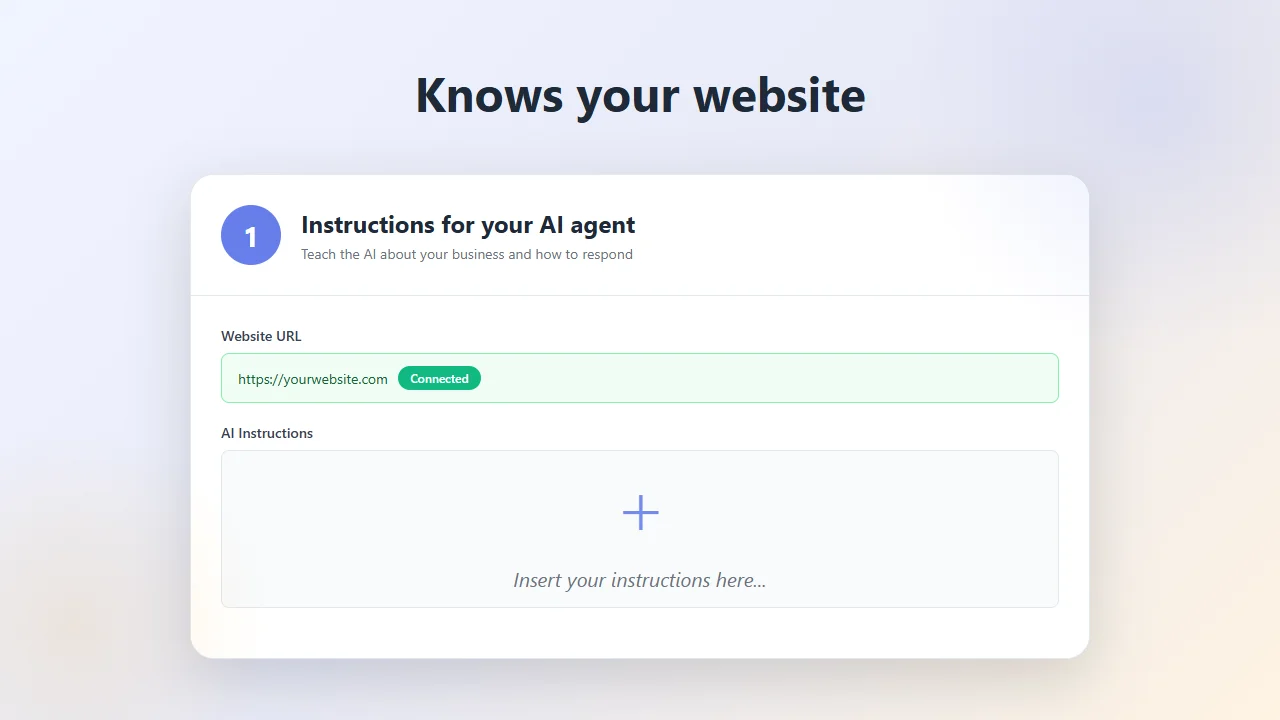

See the university chatbot trained on your institution's content

Enter your admissions or main university URL and the assistant will pull real questions from your pages

One assistant trained across admissions, registrar, bursar, and student affairs content

Higher education institutions publish their knowledge across dozens of micro-sites — admissions, graduate school, each college, housing, the registrar, the writing center, study abroad, career services. A university chatbot is only useful if a single conversation can touch all of those sources. Asyntai crawls your public domains, ingests uploaded PDFs of the academic catalog, student handbook, and policy manuals, and serves answers from the whole set as one unified campus brain.

- Multi-subdomain crawl: Pulls pages from admissions.edu, registrar.edu, financialaid.edu, studentlife.edu, and every individual school or department site. The chatbot stitches them into one queryable layer instead of ten separate silos.

- Catalog and handbook upload: Drop in the course catalog PDF, the undergraduate and graduate handbooks, residential life policies, and academic integrity documents. Content that often lives behind PDFs rather than crawlable HTML is first-class input.

- Re-train each semester: When program requirements change, deadlines roll forward, or a new scholarship launches, refresh the training data from the dashboard and the chatbot reflects the update before the next enrollment cycle begins.

Fluent in 36 languages for applicants and students whose day starts when your offices close

Modern universities recruit globally and support a student body that spans every timezone on earth. Admissions officers can't staff a Mandarin-speaking counselor for Shanghai applicants and an Arabic-speaking counselor for Gulf applicants and hold late-night office hours for domestic students juggling jobs. A university chatbot closes that coverage gap without hiring — answering the same prospective student question in whatever language it was asked, whenever it was asked, with the same grounding in your official materials.

- 36 languages out of the box: Your catalog stays in English (or whichever primary language your institution publishes in); the assistant translates questions and answers on the fly, which is valuable for Chinese, Indian, Nigerian, Vietnamese, and Latin American applicant pipelines where English fluency varies.

- Always awake: A prospective student refreshing the application portal at 2 a.m. the night before a deadline gets the same accurate deadline answer as a student walking into the admissions office at noon: no queued tickets, no voicemail, no "office hours resume Monday."

- Escalation with full context: When a question needs a human (nuanced financial aid appeals, disability services accommodations, visa-specific queries), the conversation transcript and student email land in your inbox so the counselor picks up where the assistant paused.

Deploy the university chatbot across every campus web property with one snippet

Install is a single JavaScript tag, pasted into the template header of whichever university sites should carry the chatbot — the admissions funnel, the main .edu, the student portal, the library, the Moodle or Canvas login gateway. The same assistant appears everywhere it's loaded, so a student asking about add/drop deadlines on the registrar page sees the same trusted answer as one asking from the dining services site.

- Create an Asyntai account on the free plan (100 messages to pilot with) and grab your institution's snippet from the dashboard.

- Drop the snippet into the shared header of your CMS — Cascade, Drupal, WordPress Multisite, Sitecore, whatever powers the .edu — so it propagates across every page.

- Point the crawler at your primary URLs and upload your academic catalog, student handbook, and financial aid policy documents.

- Configure escalation rules for sensitive topics (mental health, Title IX, financial hardship) so those conversations route to qualified staff rather than the chatbot.

```html
<script src="https://asyntai.com/widget.js"
        data-id="your-university-id" async>
</script>
</head>
<!-- University chatbot live across admissions, registrar, and student portals. -->
```
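If several CMS templates each need to emit the tag, one way to keep them identical is to generate the markup from a single helper. The sketch below is only an illustration: `renderWidgetTag` is a hypothetical name, and the only details taken from the snippet above are the `src` URL and the `data-id` and `async` attributes.

```javascript
// Hypothetical helper (not part of Asyntai): renders the embed tag for a
// given widget id so every site template emits the same markup.
function renderWidgetTag(widgetId) {
  // Escape characters that could break out of the attribute value.
  const escaped = String(widgetId)
    .replace(/&/g, "&amp;")
    .replace(/"/g, "&quot;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;");
  return `<script src="https://asyntai.com/widget.js" data-id="${escaped}" async></script>`;
}

// Placeholder id from the snippet above; use the one from your dashboard.
const tag = renderWidgetTag("your-university-id");
```

The returned string can be written into the shared header partial of each site, just before the closing `</head>` tag.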

University chatbot — questions from higher ed IT, admissions, and student services

Common concerns raised by registrars, CIOs, and enrollment leadership before committing.

Can one chatbot serve both prospective applicants and currently enrolled students?

Yes, and this is the typical higher ed deployment. The same assistant handles an undecided high school junior asking about the pre-med track and a returning sophomore asking how to waitlist into a closed section. Because the chatbot is trained on the full catalog, handbook, and policy corpus, it understands the vocabulary of both audiences. When your student portal passes the logged-in student's record through User Context (a Standard- and Pro-tier capability) — program, year, residency status — enrolled-student replies get personalized while prospective-visitor replies stay general.

How do we keep the chatbot from giving wrong answers on sensitive topics like financial aid or immigration?

Two mechanisms. First, custom instructions let you tell the assistant exactly how to handle sensitive categories — for example, "Never quote a specific financial aid award; always direct the student to the aid office" or "For F-1 visa questions, summarize general policy and route to the international student office." Second, escalation rules capture the email and transcript on those flagged topics and route them to the right specialist, so the student gets a human follow-up rather than a confident but unverified AI reply.

What about FERPA and student data privacy?

Public-facing chatbot conversations (prospective students browsing anonymously, general policy questions) typically involve no protected education records. For logged-in student portal deployments where User Context passes identifying information, treat the Asyntai widget the same as any third-party tool that receives FERPA-adjacent data: review your data processing terms, document the use case, and limit the context payload to what's actually needed for the reply (program and year are usually enough; GPA and transcript data generally aren't). Data is encrypted in transit and at rest.
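Limiting the context payload is easiest to enforce with an explicit allowlist on the portal side, before anything is handed to the widget. The following is a minimal sketch under assumptions: the field names (`program`, `year`, `gpa`) describe a hypothetical portal record, not an Asyntai schema, and how the resulting object is passed to User Context is left to the product documentation.

```javascript
// Fields allowed to leave the portal. "program" and "year" follow the text
// above; the property names are assumptions about your own student record.
const ALLOWED_CONTEXT_FIELDS = ["program", "year"];

// Returns a new object containing only allowlisted fields that are present.
function minimalContext(studentRecord) {
  const context = {};
  for (const field of ALLOWED_CONTEXT_FIELDS) {
    const value = studentRecord[field];
    if (value !== undefined && value !== null) {
      context[field] = value;
    }
  }
  return context;
}

// Example record with fields that should not reach the widget.
const record = { program: "Biology BS", year: 3, gpa: 3.7, studentId: "redacted" };
const payload = minimalContext(record);
// payload is { program: "Biology BS", year: 3 }
```

An allowlist fails safe: a field added to the student record later stays out of the payload until someone deliberately adds it to the list.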

Can the chatbot handle our college-specific content — each school's unique requirements?

Yes. A research university typically runs separate micro-sites for the College of Arts and Sciences, the business school, the law school, engineering, nursing, and so on, each with its own curriculum and prerequisites. The crawler pulls content from all of them and the assistant references the correct school when a question is school-specific. If a student asks "what prerequisites does the MBA program require," the answer pulls from the business school's pages rather than conflating it with undergraduate admissions requirements.

Which languages are most used by our international applicants?

All 36 supported languages are available, but the ones most higher ed clients report getting mileage from are Mandarin, Spanish, Arabic, Vietnamese, Portuguese, Korean, Hindi, and French — reflecting typical international enrollment pipelines. The assistant detects the applicant's language automatically from their first message, so you don't need to run separate language variants of the chatbot or ask visitors to pick a flag.

Does this integrate with Banner, Workday Student, or our SIS?

Direct SIS integration isn't required for most use cases — the chatbot answers informational questions from your catalog and published policies, which is the bulk of inbound volume. For personalized replies ("when does my registration window open?"), the User Context feature lets your portal pass the relevant fields from Banner or Workday into the assistant's context before the widget loads. Actions that would write back to the SIS — actually registering for a course, submitting a form — remain in your SIS where they belong; the chatbot points the student to the right workflow.

How do we deploy across multiple campus subdomains on our plan?

Free includes a single site slot; Starter raises the cap to two, Standard to three, and Pro to ten. For a single unified university chatbot that appears across many subdomains of one .edu, most institutions treat that as one site and use the same snippet everywhere — this works under a single plan slot. If you need fully separate chatbot instances with different training, branding, and escalation rules — for example, a law school with its own dedicated assistant, or a continuing education division operating under a different brand — each of those counts as a separate site against the plan's multi-site allowance.
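As a quick planning aid, the slot counts above can be turned into a lookup. This sketch only encodes the site-slot numbers listed in this answer; the function name is hypothetical, and it ignores message allowances and feature differences between tiers.

```javascript
// Site slots per plan, in ascending order, as listed above.
const SITE_SLOTS = [
  ["Free", 1],
  ["Starter", 2],
  ["Standard", 3],
  ["Pro", 10],
];

// Returns the first plan whose slot count covers the number of separately
// trained chatbot instances, or null if none of the listed plans does.
function smallestPlanFor(separateInstances) {
  if (!Number.isInteger(separateInstances) || separateInstances < 1) {
    throw new RangeError("separateInstances must be a positive integer");
  }
  for (const [plan, slots] of SITE_SLOTS) {
    if (slots >= separateInstances) {
      return plan;
    }
  }
  return null;
}

// One unified assistant across many subdomains counts as a single instance.
const unified = smallestPlanFor(1);            // "Free"
// Main campus, law school, and continuing education as separate assistants.
const threeAssistants = smallestPlanFor(3);    // "Standard"
```

Slot count is only one input to the choice: message volume and features such as User Context (Standard and Pro) may push a deployment to a higher tier than this lookup suggests.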

What does pricing look like for a university-scale deployment?

The free plan covers 100 messages, useful for a pilot with admissions or IT before broader rollout. Paid tiers open at $39 monthly for a 2,500-message allowance. Mid-size and large universities with active admissions funnels and busy student portals typically land on higher message tiers. Billing is driven by how many messages the assistant handles, not by enrollment size or staff seats, so a small liberal arts college and a large state university choose among the same plan tiers based on how much traffic each generates.
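For a first sizing pass, the entry-tier figures above support some back-of-the-envelope arithmetic. The sketch below assumes each chat message counts once against the allowance; the traffic numbers are made-up planning inputs, not product data.

```javascript
// Figures from the pricing answer above.
const ENTRY_TIER_PRICE_USD = 39;
const ENTRY_TIER_MESSAGES = 2500;

// Rough monthly message estimate. conversationsPerDay and
// messagesPerConversation are guesses you supply for your own traffic.
function estimateMonthlyMessages(conversationsPerDay, messagesPerConversation, daysPerMonth = 30) {
  return conversationsPerDay * messagesPerConversation * daysPerMonth;
}

// True when the estimate stays within the 2,500-message entry allowance.
function fitsEntryTier(monthlyMessages) {
  return monthlyMessages <= ENTRY_TIER_MESSAGES;
}

// Effective price per message if the whole allowance is used (about $0.0156).
const pricePerMessageUsd = ENTRY_TIER_PRICE_USD / ENTRY_TIER_MESSAGES;

const pilot = estimateMonthlyMessages(20, 4); // 20 * 4 * 30 = 2400 messages
const busy = estimateMonthlyMessages(25, 4);  // 25 * 4 * 30 = 3000 messages
```

Under these assumptions, 20 four-message conversations a day fit the entry tier, while 25 a day would call for a higher one.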

University chatbot — how higher education actually uses it

Every university deals with the same paradox: the institution publishes enormous amounts of information — degree requirements, enrollment deadlines, tuition schedules, housing policies, academic integrity codes, international student visa guidance — and somehow, despite all that publishing, the admissions office fields the same two hundred questions every week, the registrar counter stays busy during add/drop, and the financial aid office's phone lines stack up every January. The content exists; the discovery problem is what breaks. A university chatbot exists to solve the discovery problem specifically. Instead of asking a prospective applicant to navigate seven menu levels and find the right PDF, the chatbot reads all of it and answers the question the applicant actually asked, in the language they asked it, at whatever hour the question arrived.

Admissions is usually the first department that sees measurable relief. Inbound volume to admissions offices is seasonal, predictable in shape but unpredictable in exact timing, and heavily weighted toward a small number of recurring questions — application deadlines, standardized test policies, transfer credit rules, tuition and fees, scholarship availability, campus visit scheduling, what to submit if a transcript is late. Human counselors end up answering these same questions several dozen times a day during peak cycles, which is low-value work that prevents them from doing the higher-value work: reading essays, making admit/deny calls, advising borderline applicants. Dropping a university chatbot on the admissions funnel reassigns the repetitive tier to automation and lets counselors spend their hours where their judgment matters.

The international applicant pipeline is where a university chatbot earns its place most quickly. It is normal for an admissions office in the United States to staff a single Mandarin-speaking counselor for a pipeline that might generate thousands of inquiries from China alone. The coverage gap is real: applicants in Beijing, Mumbai, Lagos, and São Paulo are researching universities on their own time, in their own languages, and those who can't get a clear answer on their first visit often move on to whichever competing institution answered faster. The 36-language support built into Asyntai means a Taiwanese applicant asking about the engineering program gets an accurate answer in Traditional Chinese at 10 p.m. local time, pulled from the same catalog data that powers the English experience. The institution doesn't hire a translation team; the chatbot becomes the translation layer over content that stays in its authoritative source language.

After-hours coverage matters more in higher ed than in most industries. Prospective students are often high schoolers doing college research after their own school day ends, which means 6 p.m. to midnight local time, when admissions offices are closed. Currently enrolled students pulling all-nighters on papers trip over administrative questions at hours nobody is staffing. International applicants operating on a twelve-hour offset from the institution's timezone have no overlap with office hours at all. A chatbot that's live twenty-four hours a day absorbs questions across every one of those windows, and for the ones it can't answer completely, it collects the email and routes the full transcript to the right office so the morning shift starts already briefed.

The registrar and student services side of the equation often gets automated second, and benefits in a different way. Registrar questions cluster around a predictable set of workflows — how to add or drop a class, how to request an official transcript, how withdrawal affects financial aid, how to declare a minor, how to appeal a grade. Most of these are fully documented in the student handbook, but handbooks are long, students don't read them cover to cover, and the registrar counter absorbs the search-layer labor. Training the chatbot on the handbook and related policy pages turns it into a conversational handbook search: students ask "can I drop a class in week 8 without a W," get a direct answer grounded in the official policy, and only escalate to a human when their situation is genuinely outside the documented path.

Financial aid is a delicate category that rewards careful configuration. The chatbot can and should answer general questions — FAFSA deadlines, what cost of attendance includes, how satisfactory academic progress works, the difference between subsidized and unsubsidized loans — because these are policy-level questions with official answers. It should not quote specific award amounts, make promises about individual aid packages, or handle appeals. The custom instructions feature lets you set those guardrails explicitly: "Answer general aid policy questions with citations; for any question involving an individual's aid package, decline and escalate to the aid office with the student's contact info." This keeps the chatbot useful on the 70% of inbound aid questions that are policy-level while protecting the institution from the 30% where only a qualified counselor should speak.

IT helpdesk deployment is a quieter but fast-payback use case. Campus IT fields the same VPN questions, the same LMS login resets, the same wifi setup walkthroughs, the same printing problems, and the same "I forgot my password" requests, often several times a day. Training a chatbot on the IT knowledge base — the password reset page, the VPN setup guide, the LMS student portal documentation, the wifi enrollment instructions — lets students and faculty self-serve the common cases. Tickets that reach human IT staff become the genuinely complex ones: hardware failures, account lockouts that need privileged reset, specialized software install issues. For IT departments that run on lean staff, this is often the difference between a manageable queue and a perpetually backlogged one.

Student portal deployments that enable the User Context feature (available at the Standard tier and above) are where the university chatbot shifts from informational helper to personalized advisor. When a logged-in student opens the chatbot from inside the portal, your application can pass their record — program, year, expected graduation term, advisor assignment, current registration status — into the widget's context. The assistant then tailors answers: a junior biology major asking about graduation requirements gets an answer grounded in their specific degree audit category, not a generic "see your advisor" deflection. A first-year international student asking about on-campus employment gets an answer that knows their F-1 status and points to the right employment authorization pathway. This is the difference between a chatbot that reads the handbook and a chatbot that reads the handbook and knows something about the person asking.

The leads and escalation pipeline deserves specific attention for enrollment teams. When a prospective student asks the chatbot a question and doesn't leave an email, nothing further happens, which is appropriate for an informational interaction. When they ask a question the chatbot can't fully resolve, or they opt into follow-up contact, their email and the full conversation land in the Asyntai dashboard, with optional email notifications to whoever is monitoring. For admissions, this is effectively an enrollment lead capture layer: anonymous visitors who engaged meaningfully with the chatbot can be followed up by human counselors, prioritized by the questions they asked and the depth of engagement. Admissions staff can bring these records into the CRM's regular prospect funnel manually from the dashboard or from the email notifications.

Content freshness is a university-specific operational concern because so much of the content changes on an academic calendar. Application deadlines move year to year. Tuition resets annually. Course catalog revisions happen every spring for the coming fall. Scholarship programs launch and retire. A stale chatbot answering last year's application deadline is worse than no chatbot at all, because the error gets repeated at scale. The re-train workflow on Asyntai is intentionally cheap to run — re-crawl your content from the dashboard, upload the revised catalog PDF, and the assistant serves updated answers within minutes. Many institutions schedule a quarterly re-train as part of the standard academic operations calendar, with ad-hoc refreshes when specific policy updates ship between quarters.
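A quarterly cadence is easy to forget, so some teams may want a small reminder check in their own operations tooling. This is a sketch under assumptions: the 90-day threshold stands in for "quarterly", the function name is hypothetical, and the last re-train date is something you record yourself rather than a value read from Asyntai.

```javascript
const MS_PER_DAY = 24 * 60 * 60 * 1000;

// Returns true when the last re-train is older than maxAgeDays.
// The default of 90 days approximates a quarterly schedule.
function isRetrainDue(lastRetrain, now, maxAgeDays = 90) {
  const ageDays = (now.getTime() - lastRetrain.getTime()) / MS_PER_DAY;
  return ageDays > maxAgeDays;
}

// Example dates recorded by your own team.
const lastRetrain = new Date("2024-01-15T00:00:00Z");
const dueInMay = isRetrainDue(lastRetrain, new Date("2024-05-01T00:00:00Z"));   // 107 days later
const dueInMarch = isRetrainDue(lastRetrain, new Date("2024-03-01T00:00:00Z")); // 46 days later
```

Here `dueInMay` is true and `dueInMarch` is false. Calendar-driven changes such as a new application deadline still warrant an immediate refresh regardless of what the check returns.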

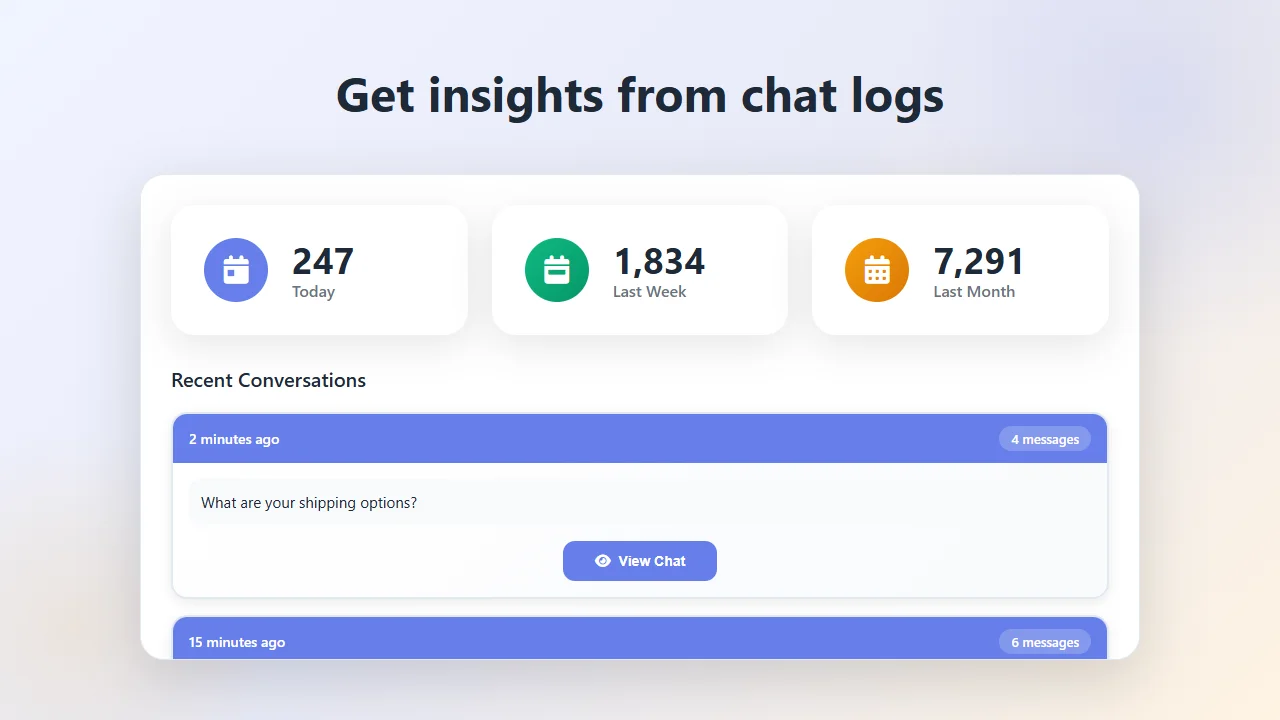

Analytics on conversation patterns give enrollment and student services teams a signal they rarely have access to otherwise. Traditional web analytics show which pages got visited; they don't show which questions visitors had in their heads when they got there. The chatbot transcript log is the opposite: it's a direct record of what prospective and enrolled students are actually asking, in their own words, clustered around the topics they care about. Over a semester, patterns emerge — a spike in questions about a specific policy that the website doesn't address clearly, a recurring confusion about a deadline that should be reworded, an international student cohort asking about something the catalog never covered. Those findings become a ranked agenda for content and process fixes that chip away at the recurring inbound load.
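One simple way to turn a semester of questions into a ranked agenda is a keyword tally. The sketch below assumes you have collected visitor questions as plain strings (for example, copied from the transcript log); the topic names and keywords are hypothetical, and no export API is implied.

```javascript
// Hypothetical topic keywords; adjust to your institution's vocabulary.
const TOPIC_KEYWORDS = {
  deadlines: ["deadline", "due date"],
  financialAid: ["fafsa", "scholarship", "financial aid"],
  registration: ["add/drop", "waitlist", "register"],
};

// Counts how many questions mention each topic and returns
// [topic, count] pairs sorted from most to least frequent.
function rankTopics(questions) {
  const counts = {};
  for (const question of questions) {
    const text = question.toLowerCase();
    for (const [topic, keywords] of Object.entries(TOPIC_KEYWORDS)) {
      if (keywords.some((keyword) => text.includes(keyword))) {
        counts[topic] = (counts[topic] || 0) + 1;
      }
    }
  }
  return Object.entries(counts).sort((a, b) => b[1] - a[1]);
}

const ranked = rankTopics([
  "When is the application deadline?",
  "What is the FAFSA deadline?",
  "Is the housing deadline the same?",
  "How do I waitlist into a closed section?",
]);
// ranked[0] is ["deadlines", 3]
```

Keyword matching is crude (a question can land in several topics, and phrasing varies across languages), but it is often enough to spot which page or policy needs rewording first.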

Rolling the chatbot out campus-wide is usually a staged process rather than a single deployment. Most institutions start with admissions because it's the highest-volume, most content-answerable surface and the team closest to the value proposition. A pilot there over an application cycle builds institutional comfort and surfaces the escalation and custom instruction patterns that work for your specific voice. From there, expansion typically goes to the registrar and student services site, then the IT helpdesk and library, then logged-in student portal deployment with User Context. By the end of a phased rollout, one chatbot — trained on the full institutional corpus, fluent in 36 languages, running around the clock — is absorbing a meaningful share of every repetitive inbound question that higher education has always paid human labor to answer manually.