Knowledge base chatbot that actually reads the docs for your users

Asyntai offers a knowledge base chatbot that ingests your help center, documentation, and uploaded PDFs — then answers users in natural language, instantly, in 36 languages. No decision trees, no manual article tagging.

Try the knowledge base chatbot on your docs site

Paste your help center or docs URL and watch the chatbot answer real user questions using your content.

Reads your help center, articles, and documents as one knowledge layer

A knowledge base chatbot only works if it has the full knowledge base. Asyntai crawls your public help center or docs site, accepts PDF and text uploads for anything private, and treats all of it as a single searchable layer the AI can pull from — article by article, paragraph by paragraph.

- Crawls your public help center: Zendesk Guide, Intercom Articles, Help Scout Docs, Notion public sites, GitBook, Readme, and custom docs. Any publicly crawlable help content becomes part of the knowledge base.

- Accepts PDFs and pasted text: internal runbooks, staff-only procedures, engineering wikis, partner SOPs, and product briefs are added as documents the chatbot references alongside public docs.

- Re-index on demand: when articles update or new docs ship, re-crawl the knowledge base and the chatbot's answers update, with no fixed retraining cycle or model fine-tuning involved.

Visitors ask in their words, not in your article titles

Traditional knowledge base search punishes users for not knowing the exact phrasing of your article titles. A knowledge base chatbot flips that — the user asks however they think, the AI understands intent, and the answer comes back in plain prose with the article as a citation.

- Intent-based answering: "Why isn't my invite email arriving" and "teammate didn't get the invitation" both pull from the same invitation troubleshooting article.

- Cites the source: the chatbot can reference the specific article it drew the answer from, so users who want the full context can click through.

- Works in 36 languages: non-English users can ask in their own language even if your knowledge base articles are English-only, because the AI handles the translation layer on the fly.

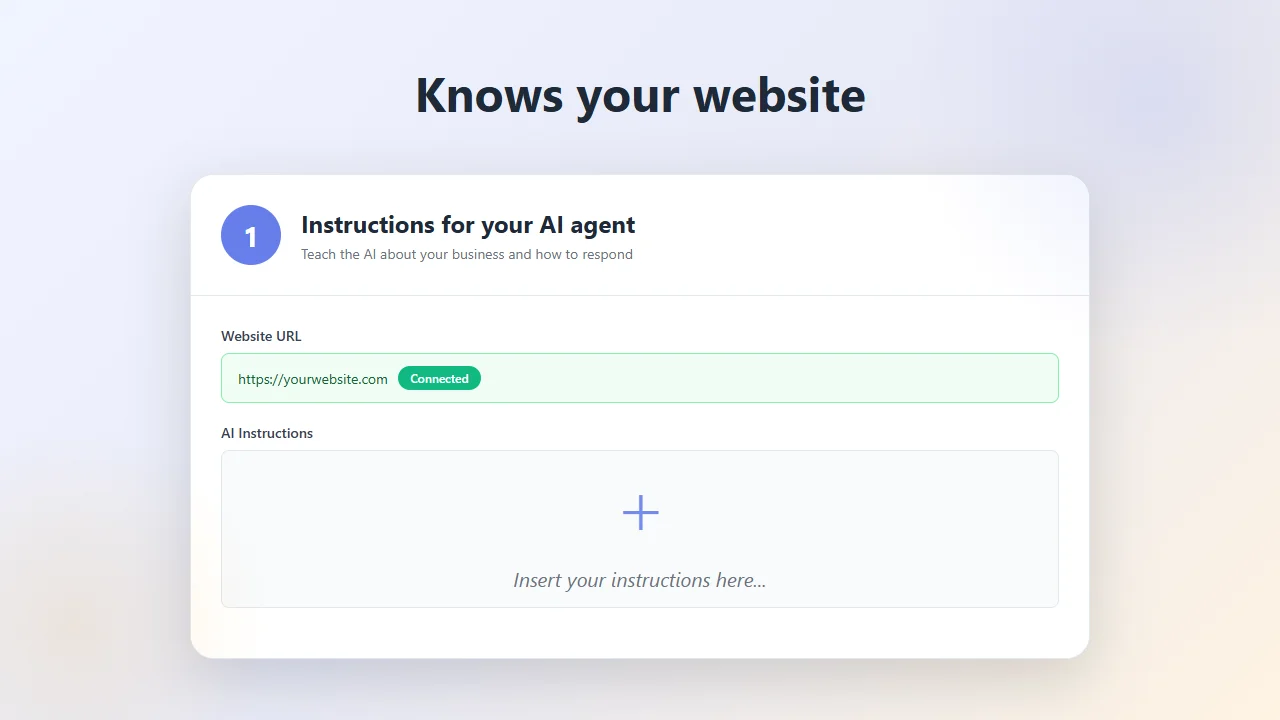

Drop the knowledge base chatbot into your help center in minutes

The knowledge base chatbot installs as a single JavaScript snippet — paste it into the header of your help center, docs site, or product app, and the chat is live everywhere it's loaded. Works with Zendesk Guide, Intercom, custom docs sites, and in-app installs.

- Sign up for a free Asyntai account and copy your snippet from the dashboard.

- Paste the snippet into the `<head>` of your help center or docs site, through theme settings, a header injection feature, or the template directly.

- Point Asyntai at your help center URL and upload any internal docs you want the chatbot to reference.

- Write a few custom instructions about citation style, escalation rules, and tone — then go live.

    <script src="https://asyntai.com/widget.js" data-id="your-site-id" async></script>
    </head>

The knowledge base chatbot is now live on every help center page.

Knowledge base chatbot — FAQs

What support, docs, and product teams usually confirm before rolling this out.

Which knowledge base platforms does this work with?

Any platform with a publicly crawlable help center or docs site. Zendesk Guide, Intercom Articles, Help Scout Docs, GitBook, Readme.com, Notion public pages, Document360, custom documentation sites — as long as the articles are reachable by a crawler, Asyntai can ingest them. For private docs or internal knowledge bases that aren't exposed to the web, you upload the content as PDFs or paste it in as text.

How does this compare to a help center's built-in search?

Built-in help center search is keyword-matched and usually returns a list of articles. The knowledge base chatbot instead returns a direct answer synthesized from the relevant article, in natural language, often referencing the article as a citation. For users who don't know the exact phrasing your articles use, the conversational approach typically finds the right answer in one exchange instead of three search refinements.

Can the chatbot handle private or internal knowledge bases?

Yes, by upload. Internal SOPs, staff-only runbooks, private onboarding guides, unpublished engineering docs — upload them as PDFs or paste the text directly into the Asyntai knowledge base. The chatbot treats public and private content as one layer when answering.

How often does the chatbot need to be retrained?

Whenever your content changes meaningfully. There's no fixed retraining cycle — you re-crawl your knowledge base from the Asyntai dashboard when new articles are published, existing articles are rewritten, or major product releases ship with doc updates. The re-index takes minutes, not hours, and the chatbot's answers update immediately.

Does it work in multiple languages?

Yes — 36 languages. If your articles are written in English but you have international users, the AI can answer their questions in French, German, Japanese, Portuguese, Spanish, or any of the supported languages. The chatbot does the language conversion on the fly, using your English content as the source of truth.

Can users reach a human when the chatbot can't help?

Yes. The chatbot captures the user's email and the full conversation context when it can't fully resolve a question, and routes the handoff to your support inbox (via the Asyntai dashboard and real-time email notifications). Your team picks up the conversation with all the context the chatbot gathered — no "please start over" tickets.

Will the chatbot slow down my help center?

No. The install snippet loads the widget with the async attribute, so the browser downloads the script in the background instead of pausing page rendering to fetch it. It behaves like any other third-party script on your help center: a Google Tag, a Meta Pixel, an analytics tool.
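For single-page apps, or setups where the header template can't be edited, the same non-blocking load can be sketched in JavaScript. This is a minimal sketch rather than Asyntai's documented method. The script URL and the data-id attribute come from the install snippet, and "your-site-id" is its placeholder. The injectWidget helper is hypothetical, and whether the widget supports being injected this way is an assumption to verify before relying on it.

```javascript
// Hypothetical helper: appends the widget script to <head> without blocking parsing.
// The URL and data-id attribute are taken from the install snippet.
function injectWidget(doc, siteId) {
  const script = doc.createElement("script");
  script.src = "https://asyntai.com/widget.js";
  // Scripts inserted from JavaScript are async by default; set it explicitly for clarity.
  script.async = true;
  script.setAttribute("data-id", siteId);
  doc.head.appendChild(script);
  return script;
}

// Only run in a browser, where a real document exists.
if (typeof document !== "undefined") {
  injectWidget(document, "your-site-id");
}
```

Passing the document in as a parameter keeps the helper easy to exercise outside a browser, for example with a stub object in a unit test.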

Can I use this on multiple help centers or product docs?

Yes, on paid plans. Site limits are Free: 1, Starter: 2, Standard: 3, Pro: up to 10. Each knowledge base gets its own separately trained chatbot with its own content, custom instructions, and branding, which is useful for companies with multiple products, multi-region docs, or agencies managing help centers across clients.

Knowledge base chatbot — why it works where search doesn't

Most knowledge bases die quietly. A support team builds two hundred articles over two years, indexes them in a sidebar, writes a friendly intro page, and the usage stats never recover. Users land on the help center, type a question into the search bar, get seven articles ranked by whatever keyword-match algorithm the platform uses, skim the first one, decide it's not quite right, skim the second, give up, and either submit a support ticket or leave. All the work that went into writing the articles — the explanations, the screenshots, the troubleshooting steps — sits there unread because the discovery layer is broken. A knowledge base chatbot exists to fix exactly that failure: it reads the articles the user would have had to search for, synthesizes an answer to the specific question they asked, and hands them the result in a conversation instead of a search results list.

The underlying problem with traditional help center search is that it punishes users for not knowing your internal vocabulary. If your article is titled "Resetting your API Credentials" but the user searches for "my token stopped working," the match is weak and the article gets ranked below something less relevant. If your article uses "workspace" and the user searches for "team account," same failure. Keyword search relies on the user approximating the article's phrasing. A knowledge base chatbot trained on the content layer bypasses this entirely. The user asks in whatever words make sense to them — "my token stopped working," "the API started returning 401," "I can't authenticate anymore" — and the AI maps all of those to the same underlying article and produces an answer grounded in its content.
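The vocabulary mismatch is easy to see with a toy keyword scorer. The sketch below is illustrative only: it uses a simple word-overlap (Jaccard) score and a small, made-up stop-word list, and is not the ranking algorithm of any particular help center platform.

```javascript
// Toy keyword matcher: lowercase, split on non-alphanumerics, drop a few stop words.
const STOP_WORDS = new Set(["my", "your", "the", "a", "an", "is", "to"]);

function tokenize(text) {
  const words = text.toLowerCase().split(/[^a-z0-9]+/);
  return new Set(words.filter((w) => w && !STOP_WORDS.has(w)));
}

// Jaccard overlap: shared words divided by all distinct words in query and title.
function keywordOverlap(query, title) {
  const q = tokenize(query);
  const t = tokenize(title);
  const shared = [...q].filter((w) => t.has(w)).length;
  const union = new Set([...q, ...t]).size;
  return union === 0 ? 0 : shared / union;
}

const title = "Resetting your API Credentials";
console.log(keywordOverlap("reset API credentials", title));    // 0.5
console.log(keywordOverlap("my token stopped working", title)); // 0
```

The second query describes the same problem as the article but shares no words with its title, so a pure keyword score gives it nothing to rank on.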

Setup is the step where most knowledge base chatbot projects get stuck on traditional tools. Old-school approaches required manually tagging articles, building intent-to-article mappings, or creating decision trees of probable user journeys. Asyntai skips all of that. You provide the URL of your help center — whether it's Zendesk Guide, Intercom Articles, Help Scout Docs, GitBook, Readme, Notion public pages, Document360, or a fully custom docs site — and the AI crawls every article it can reach. The articles become part of a single knowledge layer the chatbot queries on every user question. No tagging, no mapping, no journey curation. The chatbot is usable within an hour of account creation.

Private knowledge matters for most real support operations, and this is where the knowledge base chatbot earns its keep on more than public-facing help. Every company has internal documentation that never makes it to the public help center — runbooks for specific customer tiers, engineering notes about known issues, staff-only process guides, unpublished product briefs, partner-specific SOPs. This content often answers questions that show up in support tickets but can't be published publicly for competitive or operational reasons. You upload those documents to Asyntai as PDFs or pasted text, and the chatbot treats them as reference material when answering internal or logged-in-user queries. For support teams, this turns the chatbot into a first-line responder that's aware of both the public docs and the internal playbook simultaneously.

Multilingual use is the other place where a knowledge base chatbot produces outsized value compared with traditional help center search. Most companies' help centers are written in a single language — usually English — because writing the same 200 articles in six languages is an operational and translation-budget nightmare. But their users are global. A German developer trying to figure out a specific API behavior reads English docs with varying ease. A Japanese product manager wading through English troubleshooting articles loses time to translation. The Asyntai knowledge base chatbot lets non-English users ask in their own language, synthesizes the answer from the English source articles, and replies in the user's language. The docs stay in English as the source of truth; the chatbot becomes the translation and comprehension layer on top. For international product teams without a localization team, this is often the only realistic path to multilingual support.

Citations are an underrated detail in knowledge base chatbot design. A chatbot that answers confidently but doesn't show its work is hard to trust, especially for technical or billing questions where users want to verify. Asyntai can reference the source article alongside the answer — "this is how to reset your API token, based on the article 'Resetting API Credentials'" — so users who want the complete context can click through and read it. The citation also prevents a common failure mode: the chatbot confidently paraphrasing something it shouldn't have pulled, because the user can immediately check whether the cited article actually supports the answer. Custom instructions let you control citation behavior — always cite, cite only for technical topics, skip citations for casual questions.

The escalation handoff is where knowledge base chatbots often disappoint support teams. Many chatbot tools treat escalation as a dead end — "I couldn't help you, please fill out this form" — which puts the user back at square one after a failed conversation. Asyntai does it properly. When the chatbot can't resolve a question, it captures the user's email and the full conversation transcript, then routes the handoff to your Asyntai dashboard and email inbox in real time. Your support agent picks up the conversation already aware of what the user asked, what the chatbot replied with, and what remained open. No "can you explain the issue again" emails. No wasted context. The knowledge base chatbot handles the long tail of content-answerable questions, and escalations arrive at your team already partially qualified.

User Context on Standard and Pro plans extends the knowledge base chatbot for logged-in users in a specific way. Your app can pass the logged-in user's data — account tier, product version, recent activity, role, relevant configuration — into a JavaScript object before the widget loads. The chatbot uses that context to give version-specific or tier-specific answers: if a user on the free plan asks about a Pro-only feature, the chatbot can acknowledge the feature exists but note they'd need to upgrade to access it. If a user on an older product version asks about a UI that's only in the latest release, the chatbot can reference both versions appropriately. None of this requires backend API integration; your app pushes whatever context you want the chatbot to use.
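As a rough illustration of that pattern, the sketch below builds a context object and publishes it before the widget script loads. The source does not name the variable the widget reads or the fields it expects, so the global name asyntaiUserContext, the field names, and both helper functions are hypothetical; the real names would come from your Asyntai dashboard or docs.

```javascript
// Hypothetical global name: the real one is not stated in this page.
const CONTEXT_GLOBAL = "asyntaiUserContext";

// Copy only the fields the chatbot should see, so secrets on the
// user record (emails, hashes, tokens) are never exposed by accident.
function buildUserContext(user) {
  return {
    plan: user.plan,
    productVersion: user.productVersion,
    role: user.role,
  };
}

// Publish the context on the global object before the widget script loads.
function publishUserContext(user, target = globalThis) {
  const context = Object.freeze(buildUserContext(user));
  target[CONTEXT_GLOBAL] = context;
  return context;
}

// Example user record with made-up values.
publishUserContext({
  plan: "free",
  productVersion: "2.3",
  role: "admin",
  passwordHash: "never-forwarded",
});
```

Building the object from an explicit allowlist, rather than passing the whole user record, keeps the decision about what the chatbot can see in one place.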

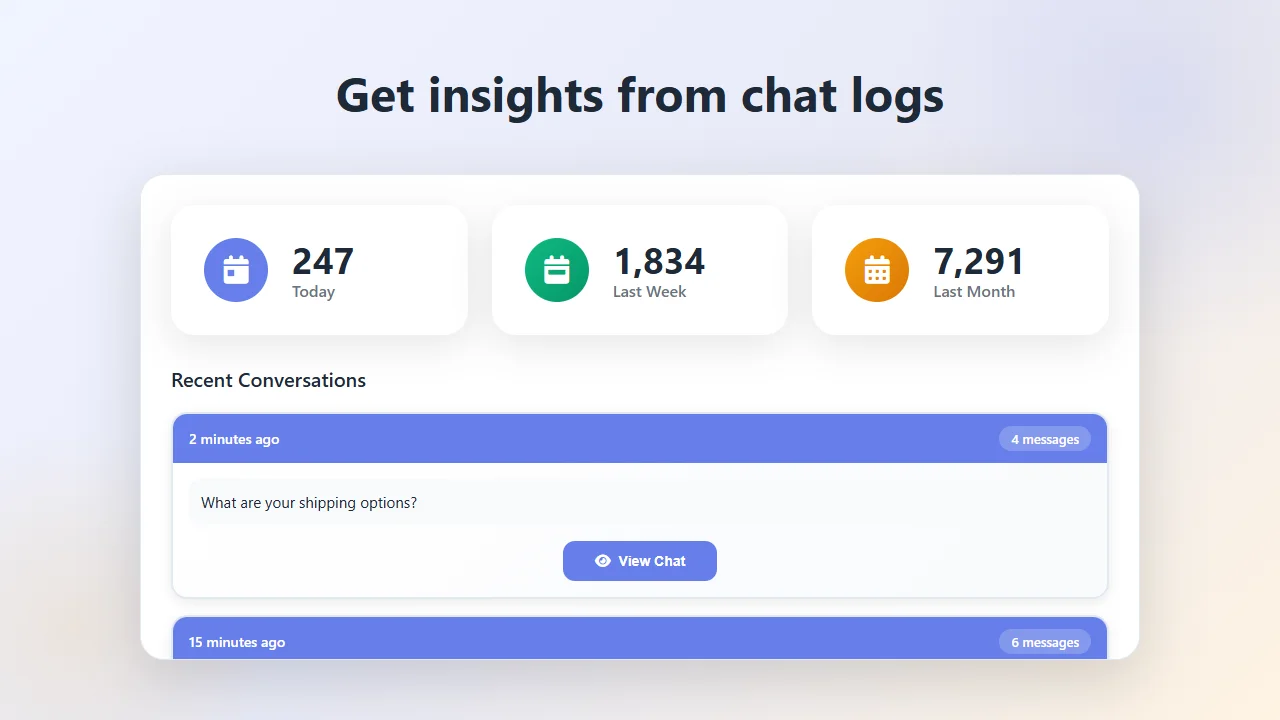

Analytics turn the knowledge base chatbot into a documentation-quality signal you didn't have before. Traditional help center analytics show you page views per article, which is a weak proxy for usefulness — an article can get many views because its title is confusing and users click through hoping for the right answer. A knowledge base chatbot's conversation log shows you what users were actually asking, whether the chatbot could answer confidently, and where the gaps are. If a lot of users keep asking about a topic and the chatbot keeps saying "I don't have information about that," you know the docs need an article written. If a specific article gets cited in answers constantly but users follow up with clarifying questions, the article needs rewriting for clarity. Over a few months, the chatbot's workload becomes a ranked list of docs improvements that would most reduce future support volume.
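One way to turn a conversation log into that ranked list is to count unanswered questions per topic. The sketch below assumes a hypothetical export where each conversation has a topic label and an answered flag; the source does not describe Asyntai's actual log format, so both field names and the sample data are made up for illustration.

```javascript
// Rank topics by how often the chatbot could not answer.
// Assumed (hypothetical) record shape: { topic: string, answered: boolean }.
function rankDocGaps(conversations) {
  const counts = new Map();
  for (const { topic, answered } of conversations) {
    if (answered) continue; // only unanswered questions signal a docs gap
    counts.set(topic, (counts.get(topic) || 0) + 1);
  }
  return [...counts.entries()]
    .map(([topic, unanswered]) => ({ topic, unanswered }))
    // Most unanswered first; ties broken alphabetically for stable output.
    .sort((a, b) => b.unanswered - a.unanswered || a.topic.localeCompare(b.topic));
}

// Made-up sample data.
const sampleLog = [
  { topic: "sso", answered: false },
  { topic: "billing", answered: true },
  { topic: "webhooks", answered: false },
  { topic: "sso", answered: false },
];

console.log(rankDocGaps(sampleLog));
// [ { topic: 'sso', unanswered: 2 }, { topic: 'webhooks', unanswered: 1 } ]
```

Topics at the top of the list are the articles most worth writing first.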

Pricing scales with conversation volume rather than article count or agent seats. The free tier covers 100 messages per month, enough to test the knowledge base chatbot on a smaller docs site or a single product area. Paid plans start at $39 per month for 2,500 messages, realistic for mid-sized help centers with healthy traffic. Higher tiers exist for high-volume docs sites: developer-facing docs for popular APIs, user-facing help centers for large SaaS products, customer-facing knowledge bases for enterprises with significant support load. Site limits scale with the plan (1 on Free, 2 on Starter, 3 on Standard, up to 10 on Pro), which matters for companies running separate knowledge bases for different products, different regions, or different customer tiers.

Categories that benefit most from a knowledge base chatbot are predictable but worth naming. Developer-facing API documentation wins enormously because developers expect to ask natural-language questions about specific endpoints, error codes, and code samples — and the traditional "search the docs" pattern is particularly painful in that context. User-facing SaaS help centers see ticket deflection of 40–60% when the chatbot handles content-answerable questions. Technical product documentation for hardware, IoT, and complex software wins on the "how do I reset / configure / troubleshoot" class of questions. Internal employee knowledge bases reduce ops and HR volume on "how do I request / submit / access" questions. Higher education and training platforms win on course-content and policy questions. Any operation where a meaningful share of inbound questions can be answered by content already written benefits disproportionately from adding a knowledge base chatbot on top.

Deploying the knowledge base chatbot doesn't require a migration plan. Paste the snippet into your help center or docs site header. Point Asyntai at your content URL. Upload any internal docs the chatbot should reference. Write a handful of custom instructions about citation style, escalation thresholds, and tone. Test real user questions to make sure the answers land right. Go live. Most teams complete the full setup in under a day, and the quality of answers improves over the following weeks as you add custom instructions for edge cases the initial setup didn't cover. From there, the chatbot works quietly — resolving the content-answerable majority of user questions, capturing escalations with context, surfacing the docs that need writing, and turning your knowledge base from a passive library into an active first-line support layer.